Every training session. Without fail.

Whether we are working with accountants, law firms or financial advisors, the same question comes up before we have even opened Copilot: is my client data safe?

It is the single most common concern we hear when delivering Microsoft 365 Copilot training across UK professional services firms. And honestly, it should be. If you are handling sensitive client information every day, you would be negligent not to ask.

But here is the thing. This question is not just the most common one we get. It is also one of the most important differentiators between Microsoft 365 Copilot and other AI tools on the market. The way Microsoft handles your data inside the 365 ecosystem is arguably the single biggest factor in what makes Copilot so powerful for professional services firms. You can use it with real client work, confidently, from day one.

The answer is yes. You can absolutely use Microsoft 365 Copilot with client data. Let us explain exactly why, so you can feel confident rather than just taking our word for it.

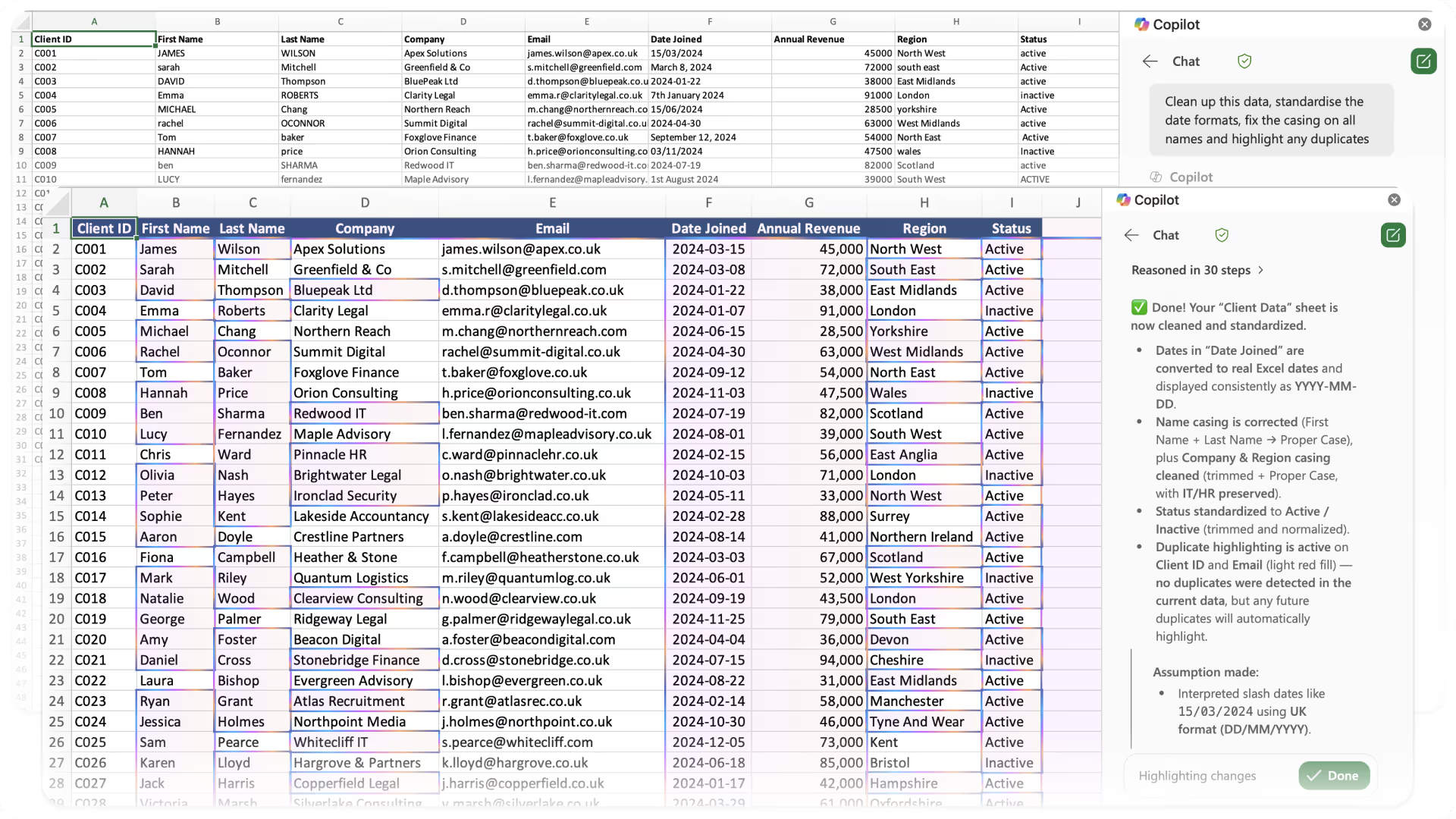

The Green Shield: Your Visual Confirmation

When you open Microsoft 365 Copilot, look at the top right of the interface. If you see a green shield icon, Enterprise Data Protection (EDP) is active. That shield is your confirmation that EDP applies to this chat. Specifically, it means:

Your prompts and responses are processed within the Microsoft 365 service boundary.

Your data is not exposed to public AI services or used to train public models.

Your conversations are not used to train the foundation models behind Copilot.

Copilot respects the same access controls and permissions already in place across your Microsoft 365 environment.

That last point is significant. Copilot can only access content that you are authorised to view. If a colleague does not have access to a particular SharePoint site or client folder, Copilot will not surface that information to them either. Your existing security setup carries straight through.
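For the more technically minded, the idea of permissions carrying through can be sketched in a few lines of Python. This is a simplified illustration of permission-based filtering in general, not Microsoft's actual implementation. The `Document` and `User` classes, the group names and the `visible_documents` function are all made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    allowed_groups: frozenset  # hypothetical: groups permitted to read this document


@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset  # hypothetical: groups this user belongs to


def visible_documents(user, documents):
    """Keep only documents that share at least one group with the user."""
    return [doc for doc in documents if user.groups & doc.allowed_groups]


# Made-up sample data for illustration only.
documents = [
    Document("Smith Ltd year-end accounts", frozenset({"audit-team"})),
    Document("Staff handbook", frozenset({"all-staff"})),
    Document("Partner remuneration review", frozenset({"partners"})),
]

trainee = User("Alex", frozenset({"all-staff"}))
auditor = User("Priya", frozenset({"all-staff", "audit-team"}))

print([d.title for d in visible_documents(trainee, documents)])
print([d.title for d in visible_documents(auditor, documents)])
```

In this toy model the trainee only ever sees the staff handbook, while the auditor also sees the client accounts. Neither sees the partner review, because neither is in the partners group.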

Your Data Never Trains the Model

This is arguably the most important thing to understand about Microsoft 365 Copilot, and it comes up in every single training session we deliver.

Microsoft is explicit: prompts, responses and data accessed through Microsoft Graph are not used to train the foundation models powering Copilot. This is not a setting you need to toggle on. It is not limited to enterprise-tier licences. It applies to every Microsoft 365 Copilot user, full stop.

That means when your team drafts client advice, summarises meeting notes or analyses a financial spreadsheet using Copilot, none of that interaction feeds back into the model. Your client data stays yours.

It is worth noting that Microsoft may use data for service operations like abuse detection and legal compliance, but this is fundamentally different from training the AI. The foundation models that power Copilot never learn from your conversations.

This matters enormously in regulated industries. For accountants bound by confidentiality obligations, solicitors handling privileged communications or financial advisors managing sensitive portfolios, this is the reassurance that makes Copilot viable in a way that many other AI tools simply are not.

How Does This Compare to ChatGPT and Claude?

This is where the differences become stark, and it is exactly why we consider data protection to be the biggest differentiator for Copilot in the Microsoft 365 ecosystem.

ChatGPT (OpenAI)

If your team members are using the free version of ChatGPT, or even a paid Plus or Pro subscription on a personal account, their conversations may be used to train OpenAI's models by default. Users can opt out manually through their settings, but unless they do, their data can feed the model.

OpenAI's Business and Enterprise plans do exclude your data from training, but these are separate paid tiers with their own pricing. The protection is not automatic. It depends entirely on which plan you are using.

Claude (Anthropic)

Anthropic updated its consumer terms in late 2025. Individual users on Free, Pro and Max plans now have a choice about whether to share their conversations for model training, but the default for new users is set to on. Users must actively opt out.

Commercial users (Claude for Work, Enterprise, API access) are explicitly excluded from data training, which is a strong position. However, it is worth noting that Anthropic processes data server-side in the US, so for firms with strict GDPR requirements or data residency obligations, this may need additional consideration.

Microsoft 365 Copilot

No training on your data. No opt-out required. No distinction between licence tiers. Your data stays within the Microsoft 365 service boundary, and Microsoft backs this with its GDPR and EU Data Boundary commitments. For UK professional services firms already operating within the Microsoft ecosystem, this is usually the most straightforward option from a compliance point of view.

What You Still Should Not Do

Copilot being safe for client data does not mean you should throw all caution out the window. Some common-sense rules still apply.

Never enter login credentials, passwords or API keys into any AI tool. Copilot included. These are authentication secrets, not data to be processed.

Do not paste sensitive personal identifiers unnecessarily. If you are summarising a document, Copilot can work with the file directly rather than you copying and pasting National Insurance numbers or bank details into a chat prompt.

Review outputs before sending. Copilot is a drafting assistant, not a replacement for professional judgement. Always check what it produces, especially in client-facing communications.

Ensure your Microsoft 365 permissions are properly configured. Copilot inherits your existing access controls, which is brilliant, but only if those controls are set up correctly in the first place. If everyone in your firm has access to everything on SharePoint, Copilot will surface all of it. This is a governance consideration worth reviewing before any Copilot rollout.
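If your IT team wants a starting point for that permissions review, the check can be sketched as a short Python script. This is a minimal sketch under stated assumptions: it assumes you have already exported a list of sites and the groups that can read them, and the site names, the `BROAD_GROUPS` set and the `find_overshared` function are hypothetical. It does not call any Microsoft API.

```python
# Assumption: group names your firm treats as "effectively everyone".
# Adjust this set to match your own tenant.
BROAD_GROUPS = {"Everyone", "Everyone except external users", "All Staff"}


def find_overshared(site_permissions):
    """Return the names of sites readable by any firm-wide group, sorted."""
    return sorted(
        site
        for site, groups in site_permissions.items()
        if BROAD_GROUPS & set(groups)
    )


# Made-up export: site name -> groups with read access.
site_permissions = {
    "Client Files - Smith Ltd": ["Audit Team"],
    "HR Records": ["HR", "Everyone except external users"],
    "Firm Templates": ["All Staff"],
    "Partner Board Papers": ["Partners"],
}

for site in find_overshared(site_permissions):
    print(f"Review access: {site}")
```

With this sample data the script flags Firm Templates and HR Records. The first is probably fine to share widely; the second is exactly the kind of site to lock down before switching Copilot on.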

Why This Matters for Your Firm

The firms getting the most value from AI right now are the ones that moved past the fear and started using Copilot confidently with real client work. They are drafting engagement letters faster, summarising lengthy documents in seconds and preparing client communications with less friction.

The firms still hesitating are often stuck on this single question: is it safe? We hope this post gives you the clarity to move forward. If you are also wondering what you get with and without a Copilot licence, our Copilot Basic vs Premium breakdown covers the full feature comparison.

If you would like to explore how Microsoft 365 Copilot could work within your firm's compliance requirements, or if you would like to book a training session for your team, get in touch with IQ IT today.
